Re: Simple Java output Question for 458

Posted: Tue Jul 28, 2009 6:34 pm

by MetaBrain

Before we start: I did it in Java in 5 minutes but always got WA, so I did the same thing in C and got AC.

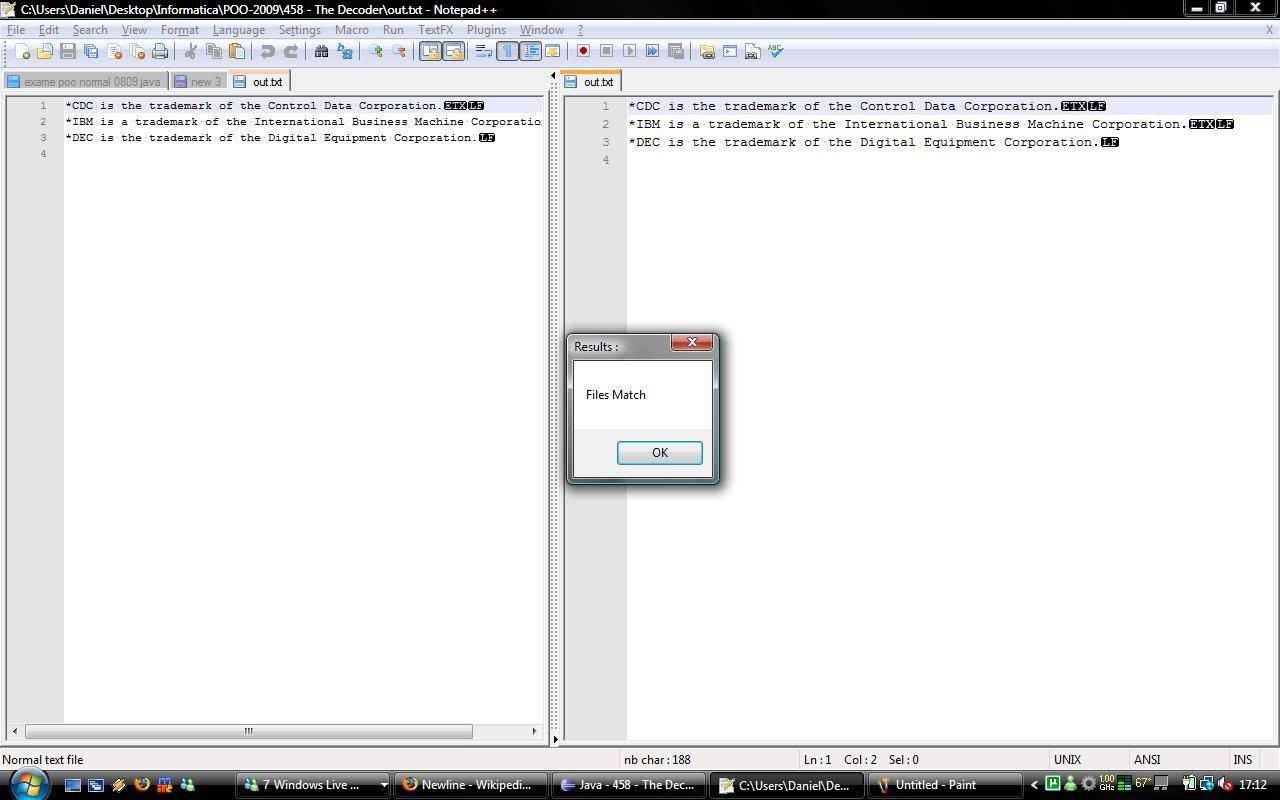

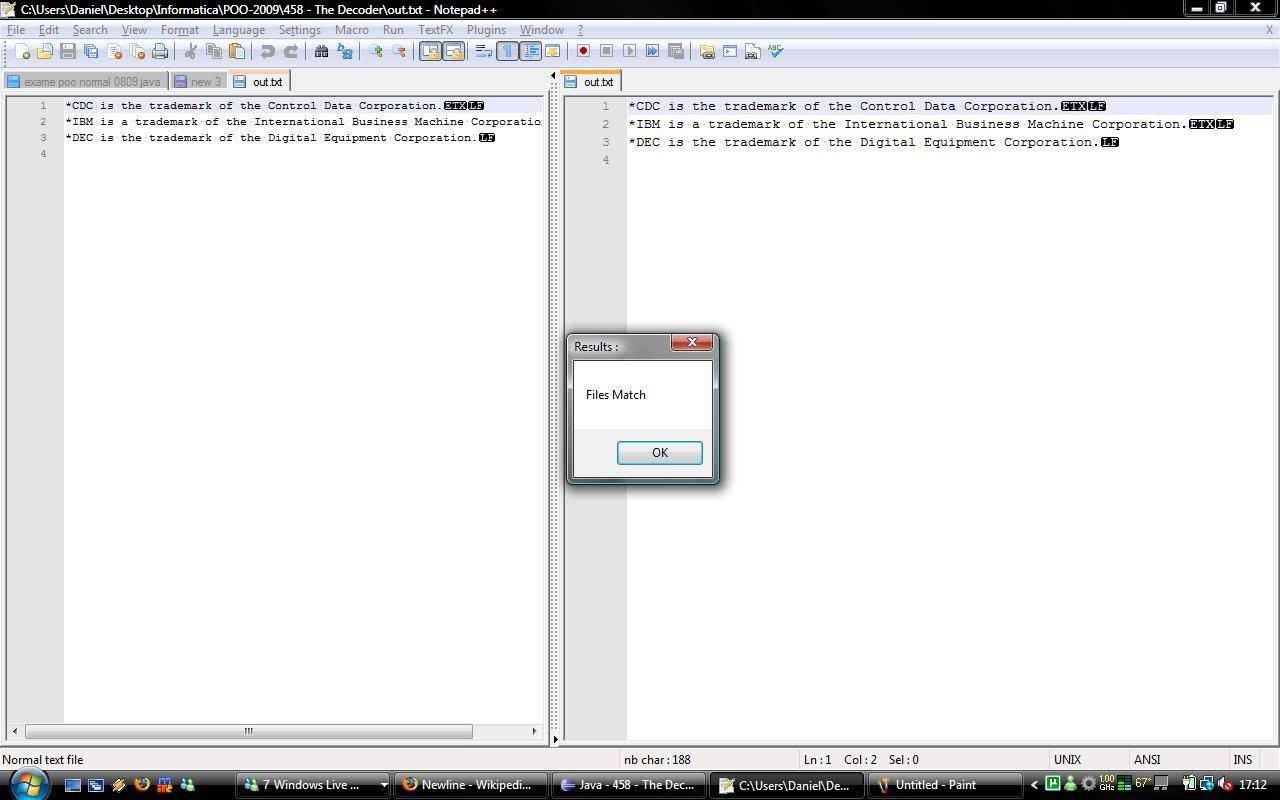

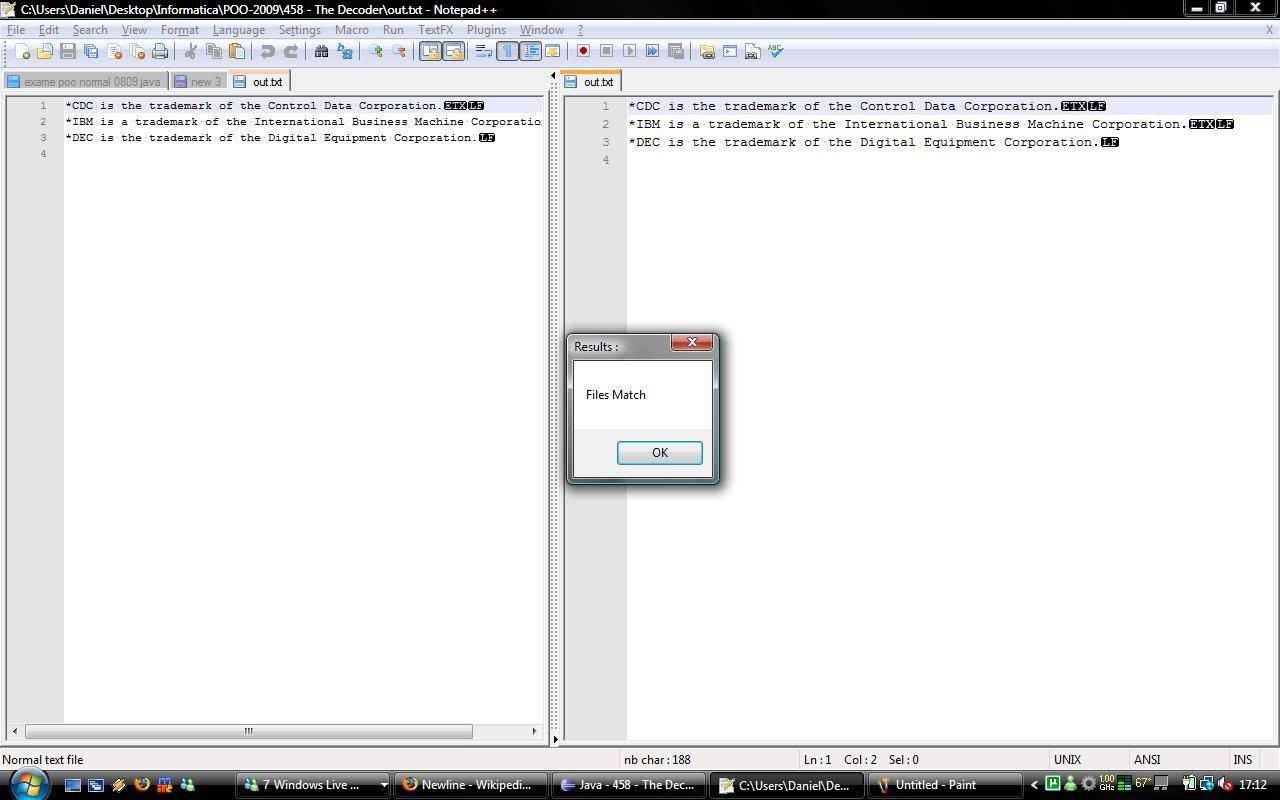

But I didn't understand why I got WA, so I opened both of them in Notepad++, and the only difference was the end-of-line characters.

My C version always produced ETX+LF, and the Java one only CR. It cost me a lot of time, but I finally managed to duplicate that in Java, as the picture proves:

My Java code :

Code:

import java.io.BufferedOutputStream;
import java.io.File;
import java.io.FileNotFoundException;
import java.io.PrintWriter;
import java.util.Scanner;

/**
 * @author Daniel
 */
public class Main {
    // static File file = new File("lol.txt") ;
    // static File fileout = new File("out.txt") ;
    static Scanner in = new Scanner(System.in);
    // static PrintWriter output ;//= new PrintWriter(fileout) ;
    static PrintWriter output = new PrintWriter(new BufferedOutputStream(System.out));

    public static void main(String[] args) throws FileNotFoundException {
        // in = new Scanner(file) ;
        // output = new PrintWriter(fileout);
        while(in.hasNextLine()) {
            char[] line = in.nextLine().toCharArray() ;
            for(int i = 0 ; i<line.length ; i++) {
                output.append((char)(line[i]-7)) ;
            }
            if(in.hasNext()) {
                output.append((char)3);
            }
            output.append("\n");
            output.flush();
        }
        output.flush();
    }
}

So, where am I failing? Solving problems in Java is always such a headache... Oh well...

Re: Simple Java output Question for 458

Posted: Tue Jul 28, 2009 6:53 pm

by mf

The input to this problem is not ASCII, it may contain bytes with codes greater than 127.

Your code works with Unicode codepoints (Java's "char" type), but non-ASCII bytes may represent different codepoints, depending on file's encoding. You should either find out how to do binary I/O in Java (java.io.InputStream, I think) and work with raw bytes, or specify an encoding in which Java's char's value would be identically mapped to byte's value.

And this:

output.append((char)3);

certainly looks very wrong.

Re: 458 - The Decoder

Posted: Tue Jul 28, 2009 8:03 pm

by MetaBrain

That (char)3 is the ETX that my C program outputs when compiled and run...

I think I understood, thanks ! I'll try to do that and report back

Re: Simple Java output Question for 458

Posted: Tue Jul 28, 2009 8:37 pm

by MetaBrain

7279339 458 The Decoder Accepted JAVA 0.256 2009-07-28 18:35:05

OMFG, thanks so much, I'd never have figured it out alone.

To anyone trying to figure this out, try

static DataInputStream in = new DataInputStream(System.in);

static DataOutputStream output = new DataOutputStream(System.out) ;

And the rest is pretty easy from there

Re: Simple Java output Question for 458

Posted: Fri Dec 25, 2009 11:22 am

by Ivan Goroun

mf wrote:The input to this problem is not ASCII, it may contain bytes with codes greater than 127.

It doesn't according to the description, and it doesn't actually. My program just printf's (char - secret value) and it's accepted.

458 - what's wrong with this code? :(

Posted: Sun Jan 03, 2010 11:30 am

by irtizaur

I am continuously getting WA and can't figure out the problem.

Can somebody help?

Code:

#include <iostream>
using namespace std;

int main()
{
    char ch;
    while(cin>>ch)
    {
        if(ch=='\n'||ch=='\r')
        {
            cout<<ch;
        }
        else
        {
            ch = (char)(ch - 7);
            cout<<ch;
        }
    }
    return 0;
}

Re: Simple Java output Question for 458

Posted: Tue Dec 21, 2010 8:40 am

by snoopyhs

MetaBrain wrote:To anyone trying to figure this out, try

static DataInputStream in = new DataInputStream(System.in);

static DataOutputStream output = new DataOutputStream(System.out) ;

And the rest is pretty easy from there

Hi MetaBrain, how did you do it? I tried to use DataInputStream and DataOutputStream like this and got a Runtime Error.

I still don't really get how to properly convert a stream of bytes to ASCII characters, so could anybody please give some clarification? Thank you very much.

Code:

import java.io.*;

/**
 * @author Raymond HS
 */
public class Main {
    public static void main(String[] args) throws Exception {
        DataInputStream d = new DataInputStream(System.in);
        DataOutputStream o = new DataOutputStream(System.out);
        byte b;
        String str;
        while ((b = d.readByte()) != -1) {
            if (b == 10) {
                o.writeByte(10);
            } else {
                o.writeByte(b - 7);
            }
            o.flush();
        }
    }
}

458 Decoder , Why time limit exceede

Posted: Mon Jan 02, 2012 8:35 am

by Nisan

I can't reduce the run time and I keep getting TLE. Please, someone help me.

my code is :

#include <iostream>
#include <string>
#include <vector>
using namespace std;

int main()
{
    vector <char> symbol;
    char ch;
    int i = 0,j;
    while(!cin.eof())
    {
        cin.peek();
        while(((ch = cin.get())!='\n')&&(ch != 17))
        {
            symbol.push_back(ch);
        }
        if(ch == 17)
            break;
        symbol.push_back('\n');
    }
    //symbol.push_back(NULL);
    j = symbol.size();
    symbol[j+1] = NULL;
    for(i =0 ; i<symbol.size();i++)
    {
        if(symbol[i] >=42&&symbol[i]<127){
            symbol[i] =symbol[i]-7;
            cout<<symbol[i];
        }
        if(symbol[i] =='\n')
            cout<<"\n";
    }
    return 0;
}

Re: 458 Decoder , Why time limit exceede

Posted: Thu Jan 05, 2012 6:47 pm

by leobonalume

Nisan wrote:I can't reduce run time , But i got TLE, please someone help me..

Reading and printing one character at a time is slow, and this problem has a big input. You could try reading a whole line at once, manipulating the string, and then printing it all at once as well. Something like this:

while (readString != EOF)
{
    for (i = 0; string[i]; i++)
    {
        //manipulate string...
    }
    printString;
}

Re: 458 Decoder , Why time limit exceede

Posted: Sun Apr 08, 2012 6:14 pm

by Mahabub Khan

Where is the problem in my code?

It is showing a compiler error.

#include<iostream>
#include<String>
using namespace std;

int main()
{
    string line;
    int i;
    while(cin >> line)
    {
        for(i =0; line[i] != '\0' ; ++i)
        {
            if((line[i] >= 'H' && line[i] <= 'Z') || (line[i] >= 'h' && line[i] <= 'z'))
                putchar(line[i] - 7);
            else if(line[i] == '{')
                putchar('t');
            else if(line[i] == '1')
                putchar('*');
            else if(line[i] == '`')
                putchar(' ');
            else if(line[i] == '5')
                putchar('.');
            else if(line[i] == '|')
                putchar('u');
        }
    }
    return 0;
}

Re: 458 Decoder , Why time limit exceede

Posted: Mon Apr 09, 2012 10:34 pm

by brianfry713

#include <string>

not

#include <String>

why WA for 458

Posted: Wed Jun 13, 2012 12:06 pm

by esharif

I think I'm at beginner level in programming; let's see the code:

Code:

#include<stdio.h>
#include<string.h>

int main()
{
    int c;
    while((c=getchar()) !='\n')
    {
        if(c-7<0 && c-7>127)
            break;
        printf("%c", c-7);
    }
    printf("\n");
    return 0;
}

Re: why WA for 458

Posted: Wed Jun 13, 2012 11:28 pm

by brianfry713

You need to be able to handle multiple lines of input.

Re: why WA for 458

Posted: Sun Jan 19, 2014 11:57 am

by Shihab

AC

458 Cannot understand why WA

Posted: Sat Apr 05, 2014 1:28 am

by lordalaqua

Hello, I was eventually able to solve this problem using std::getline() for strings, but my first attempt was like this:

Code:

#include<iostream>

int main()
{
    do
    {
        char input;
        input = std::cin.get();
        char output;
        if(31 < input && input < 127) //Printable ASCII characters
        {
            output = input - 7;
        }
        else
        {
            output = input;
        }
        if(!std::cin.eof()) // avoids double output of last character
        {
            std::cout<<output;
        }
    }while(!std::cin.eof());
}

The output for the test case is exactly the same, and I don't see when this code would fail to solve the problem. If anyone could help, I would really appreciate it.